Alignment Jam: Interpretability July 14-16

Friday, July 14, 2023

18:00

WHERE

LANGUAGE

Organiser

Jan Provazník

Join us to understand the internals of language models and ML systems! Please RSVP: https://forms.gle/Gq27fKFnYV9ciofy8

Prague and more than 30 other locations around the world will research the interpretability of ML systems as part of the Interpretability Hackathon 3.0.

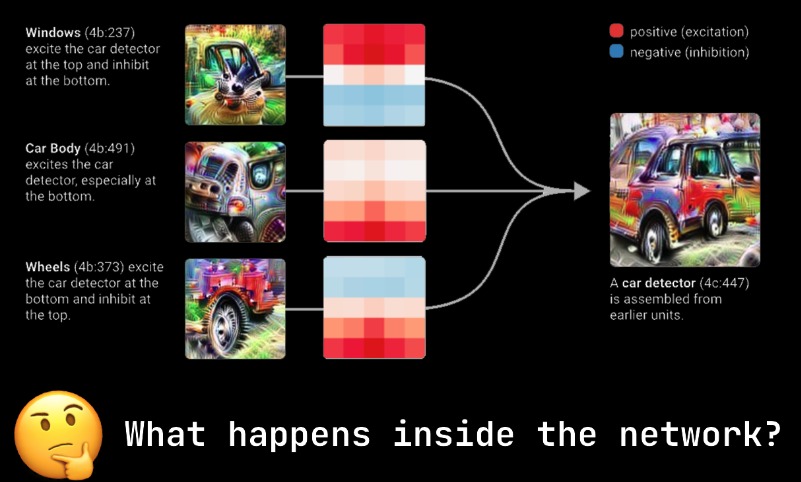

Machine learning is becoming an increasingly important part of our lives, and researchers are still working to understand how neural networks represent the world.

Mechanistic interpretability is a field focused on reverse-engineering neural networks. Research questions range from how Transformers perform a very specific task to how models suddenly improve. Check out our speaker Neel Nanda’s 200+ research ideas in mechanistic interpretability.

Check out the provided resources on the topic:

Zoom In: An Introduction to Circuits

200 Concrete Open Problems in Mechanistic Interpretability

https://twitter.com/kevrowan/status/1587601532639494146